Introducing “The Problem”

A large steel producer in Northwest Indiana (Client) recently had a legacy issue concerning one of its blast furnaces. Client noticed the furnace’s tuyeres (the holes through which air is forced into the blast furnace) were changing shape, going from cylindrical to ellipsoid. Client was concerned that the entire structure was slowly collapsing or shifting towards a point of structural failure.

To say the least, the problem was high profile.

The Call for Help

Survey crews from DLZ’s Burns Harbor office were asked to collect data on as much of the furnace’s shell as possible (the shell is over 100’ tall and nearly 50’ in diameter) and to analyze that data to better understand how much the furnace was moving.

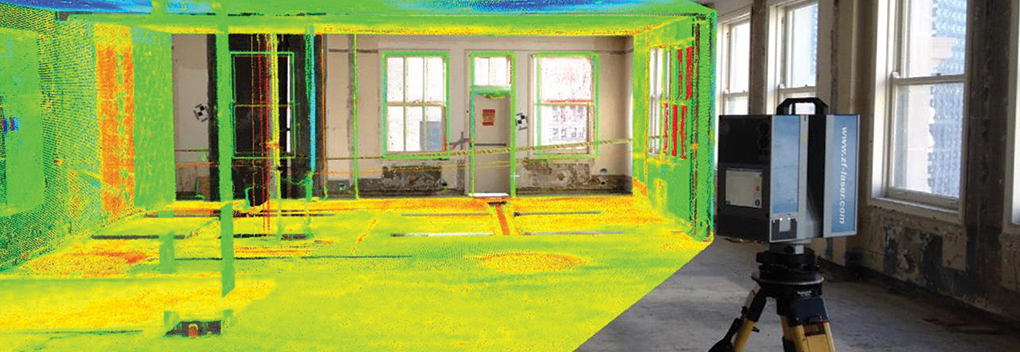

While the furnace was shut down for routine maintenance, two DLZ survey crews used a Leica P40 high-definition 3D laser scanner to collect a baseline set of data over the course of a 10-hour work day.

In total, the initial data set was approximately 65 GB, or 2.5 billion individual data points.

The challenge was not analyzing the data a single time. The challenge was having a way to analyze the data multiple times quickly and accurately. Additionally, the furnace is subject to normal movement, and that needs to be accounted for.

Leveraging Computing Power to Process Data

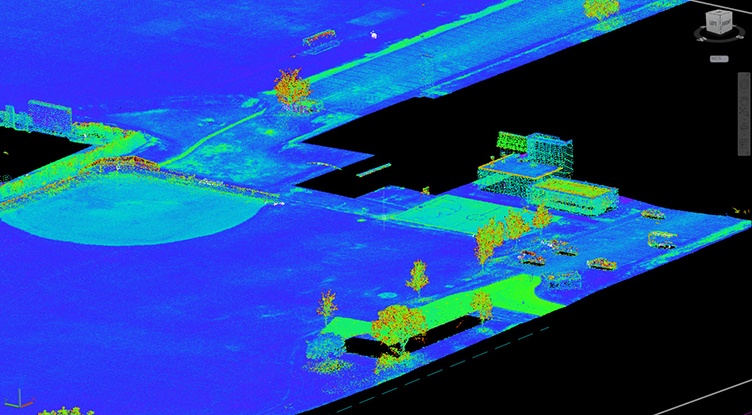

After registering the laser scan data (registration is the process of “stitching” together multiple sets of data), the rest of the processing was turned over to a computer algorithm. The algorithm parsed the data based on a radial grid and reduced the 2.5 billion points to just 20,000 representative monitoring points.
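The article does not describe the algorithm's internals, so the following is only a minimal sketch of how a radial-grid reduction could work. It assumes the furnace axis is vertical and passes through the origin, and it takes the median radius as each cell's representative value. The function name, bin sizes, and the choice of median are all illustrative assumptions, not DLZ's actual method.

```python
import math
from collections import defaultdict
from statistics import median


def reduce_to_radial_grid(points, n_angle_bins=360, z_step=0.5):
    """Collapse (x, y, z) points into one representative radius per grid cell.

    Assumption: the furnace axis is the z axis through the origin.
    Returns {(angle_bin, height_bin): median_radius}.
    """
    cells = defaultdict(list)
    for x, y, z in points:
        radius = math.hypot(x, y)                  # horizontal distance from axis
        angle = math.atan2(y, x) % (2 * math.pi)   # wrap into one full turn
        # The trailing modulo guards against rounding that lands exactly on 2*pi.
        a_bin = int(angle / (2 * math.pi) * n_angle_bins) % n_angle_bins
        z_bin = math.floor(z / z_step)
        cells[(a_bin, z_bin)].append(radius)
    # One monitoring value per occupied cell.
    return {cell: median(radii) for cell, radii in cells.items()}
```

Going from 2.5 billion points to 20,000 monitoring points means each cell summarizes roughly 125,000 raw points on average, which is why a robust statistic such as the median is a reasonable choice in a sketch like this.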

A second algorithm was written to find and analyze those same 20,000 points from any subsequent dataset.
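As a hedged illustration of that comparison step, the sketch below assumes both the baseline and the follow-up scan have already been reduced to dictionaries keyed by the same grid cells, with a radius as the value. The function names and the `tolerance` parameter (a stand-in for the furnace's normal movement) are hypothetical and do not come from the project itself.

```python
def radial_deviations(baseline, followup):
    """Return {cell: followup_radius - baseline_radius} for cells in both grids."""
    shared = baseline.keys() & followup.keys()   # cells missing from either scan are skipped
    return {cell: followup[cell] - baseline[cell] for cell in shared}


def cells_outside_tolerance(deviations, tolerance):
    """Keep only cells whose movement exceeds the assumed normal range."""
    return {cell: d for cell, d in deviations.items() if abs(d) > tolerance}


# Example with made-up values: one cell moves well beyond the tolerance.
baseline = {(0, 0): 10.0, (1, 0): 10.0, (2, 0): 10.0}
followup = {(0, 0): 10.01, (1, 0): 9.9, (3, 0): 10.0}
deviations = radial_deviations(baseline, followup)
flagged = cells_outside_tolerance(deviations, tolerance=0.05)
```

Keying both scans by the same cell identifiers is what makes repeat analysis fast: no point matching is needed between datasets, only a dictionary lookup.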

Understanding the Audience

Engineers, surveyors, and other technical-minded professionals are comfortable with, and often prefer, numbers and raw data. Even so, a set of 20,000 points is too large to comprehend properly, and the high-profile nature of the project involved several non-technical decision makers. With these considerations in mind, the priorities for project deliverables were as follows:

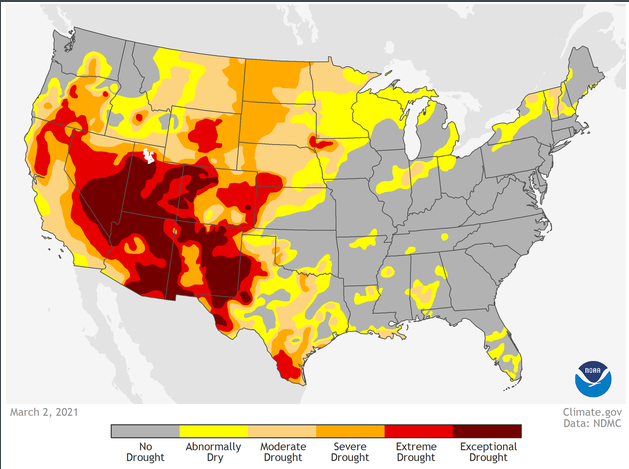

- Visuals > Numbers

With so many individual data points, it is nearly impossible to gain an accurate understanding of the structure’s movement from tables and charts alone. To address this, a colormap was created to represent deviations with color instead of numbers.

- Speed of Interpretation > Resolution of Results

By using colors to represent deviations, the reader loses the ability to know the exact deviation at any given location. The tradeoff is that the reader can interpret the entire data set quickly, because the colors form trends that read as a picture.

- Solution > Interpretation (in regard to communication)

The visualizations resulted in a quick, consistent interpretation of the data by multiple readers. This allowed the group to focus on solutions rather than spending a large amount of time trying to understand, interpret, or debate the meaning of the data.
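The colormap idea from the first two priorities can be sketched in a few lines. This is an assumed blue-white-red scheme, not the palette DLZ used: zero deviation maps to white, and the color saturates once the deviation reaches a chosen `limit`. The sign convention (positive is outward, negative is inward) is likewise an assumption made for illustration.

```python
def deviation_to_rgb(deviation, limit):
    """Map a signed deviation to an (r, g, b) tuple on a blue-white-red scale.

    Values at or beyond +/- limit are clamped to full red or full blue,
    which is the resolution given up in exchange for quick reading.
    """
    if limit <= 0:
        raise ValueError("limit must be positive")
    t = max(-1.0, min(1.0, deviation / limit))   # clamp to [-1, 1]
    fade = round(255 * (1 - abs(t)))             # 255 at zero, 0 at the limit
    if t >= 0:
        return (255, fade, fade)   # assumed outward movement shades toward red
    return (fade, fade, 255)       # assumed inward movement shades toward blue
```

Applying such a function to every monitoring cell and laying the cells out by angle and height would give a 2D image in which trends show up as colored regions rather than as columns of numbers.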

Visualizing and Presenting the Results

Ultimately the project deliverables were a 2D colormap and a 3D model, which illustrated that the furnace did show both movement and change in shape, but not enough to cause concern. After only a 10-minute conversation about DLZ’s findings, the discussion was able to move to solutions, which meant the collected data had been presented in a meaningful way that the client understood.

The Project Going Forward

Based on the results of DLZ’s analysis, the client decided to continue monitoring the blast furnace bi-monthly to ensure the structure remains in a normal range of fluctuation. At the same time, various reinforcements and materials will be tested to see how they might influence the shape of the tuyeres and movement of the structure.

The client was able to choose preventative maintenance over expensive, unnecessary measures only because of the quick turnaround: using algorithms instead of manual point cloud analysis made it possible to rapidly determine the scope of the problem.

Unexpected Takeaway

When a project’s budget starts to dwindle, billable client interactions are usually the first on the chopping block. With competition becoming ever more of a factor in the bidding process, budgets are tighter than ever. Leveraging algorithms on this project provided quick answers and freed up valuable hours, allowing for earlier and more frequent client interaction, with more face-to-face meetings and discussions than would otherwise have been possible.